AI Experiments

AI Prompt Management System

Study Focus: AI-Assisted Development, Vibe Coding, Prompt Structure, Systems Thinking

Tools: ChatGPT, Claude Code, and GitHub.

Context: As I worked with more advanced AI prompts and workflows, managing versions and reusing prompts across projects became a recurring problem. I also wanted to strengthen my AI skills by designing and building a real product instead of focusing only on prompt writing.

Goal: Build a single-user AI prompt management system that runs locally, supports organization and search, and can be moved or hosted easily. A secondary goal was to test a structured collaboration between ChatGPT and Claude.

Process: I defined the scope and constraints with ChatGPT, then translated the requirements into structured Markdown prompts for Claude. I also asked Claude to create a Markdown-based prompt template to standardize instructions and reduce ambiguity, which improved consistency across iterations. The system was built in clear phases, with architecture reviews before coding and design refinements after hands-on use.
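To illustrate the idea of a Markdown-based prompt template, here is a minimal Python sketch. The section names (Goal, Constraints, Deliverables) and the `render_prompt` helper are assumptions made for illustration, not the actual template Claude produced.

```python
def render_prompt(goal: str, constraints: list[str], deliverables: list[str]) -> str:
    """Render a structured Markdown prompt (hypothetical section layout)."""
    lines = ["## Goal", goal, "", "## Constraints"]
    # One bullet per constraint keeps each rule separately reviewable.
    lines += [f"- {item}" for item in constraints]
    lines += ["", "## Deliverables"]
    lines += [f"- {item}" for item in deliverables]
    return "\n".join(lines)


if __name__ == "__main__":
    print(render_prompt(
        goal="Add tag filtering to the prompt list",
        constraints=["Single-user only", "Local storage only"],
        deliverables=["Architecture notes before any code"],
    ))
```

Keeping every prompt in the same fixed sections is one way to get the consistency across iterations described above: each phase states its goal and limits in the same place.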

Results: The project resulted in a functional prompt management system with local storage, tagging, search, and version control. The process also improved the reliability and predictability of AI-generated code through clearer prompts and reviews.

Learnings: AI produces better results when prompts are structured with clear goals and constraints. Using ChatGPT and Claude together helped shape the problem, review outputs early, and catch scope misalignment. This approach reduced errors and kept the work aligned across phases.

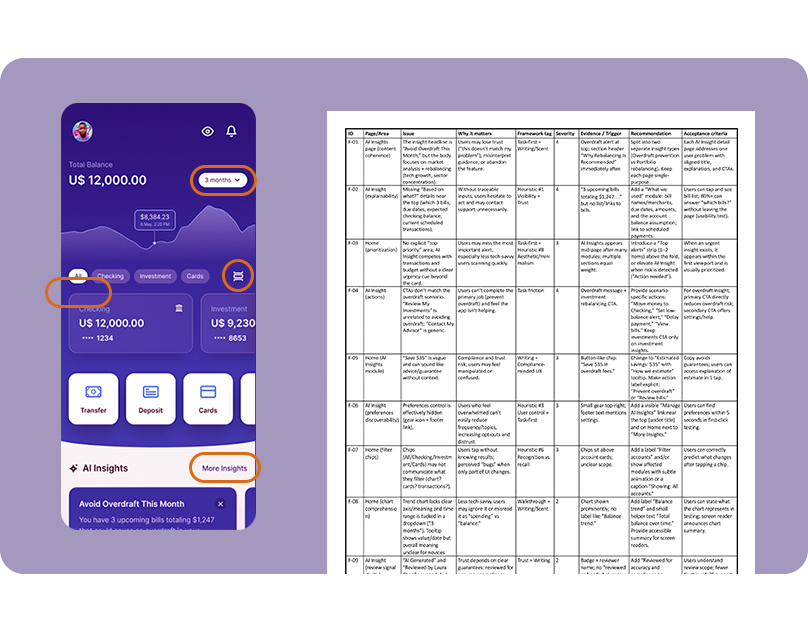

AI Design Critique

Study Focus: Usability and accessibility checks, design workflows.

Tools: ChatGPT and Figma.

Context: Design reviews often rely on manual effort and happen late in the process. This project explored whether AI could provide structured, early feedback to help designers catch issues sooner and improve overall design quality.

Goal: Evaluate whether AI can deliver clear, method-based design critiques that meaningfully support designers, reduce avoidable issues, and improve iteration quality while still relying on human judgment.

Process: A structured prompt was created that combined heuristic analysis, accessibility review, and task evaluation. Screens were uploaded, the analysis was generated, and the output was reviewed as a prioritized report listing each issue with its severity, reasoning, and suggested fix.
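The report structure described above (issue, severity, reasoning, suggested fix) can be sketched as a small data structure with a prioritization step. The `Finding` class, the three-level severity scale, and the `prioritize` function are assumptions for illustration; the actual report was produced as text by ChatGPT.

```python
from dataclasses import dataclass

# Hypothetical severity scale; lower rank means higher priority.
SEVERITY_RANK = {"critical": 0, "major": 1, "minor": 2}


@dataclass
class Finding:
    issue: str
    severity: str  # one of the keys in SEVERITY_RANK
    reasoning: str
    suggested_fix: str


def prioritize(findings: list[Finding]) -> list[Finding]:
    """Order findings from most to least severe.

    sorted() is stable, so findings with equal severity keep their original order.
    """
    return sorted(findings, key=lambda f: SEVERITY_RANK[f.severity])
```

Sorting by an explicit rank table keeps the priority order visible and easy to adjust if a team uses a different severity scale.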

Results: The generated report was detailed and largely accurate. Most findings were relevant and actionable, and could be folded into the design workflow before the next iteration. This had an immediate effect: fewer issues were carried forward, and the overall quality of the project improved. About 3% of the findings were false alarms. Feedback on interactions was limited because the screens were static, which reinforced the need for designer validation.

Learnings: AI is effective as an early critique and prioritization tool. It improves speed and coverage, but human review is still required to assess interaction, intent, and contextual nuances.